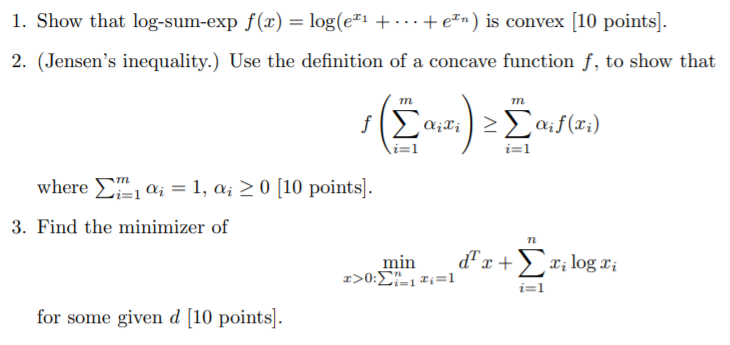

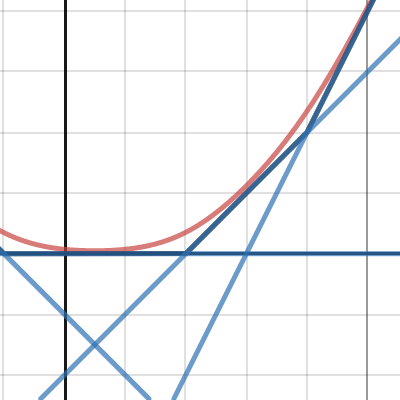

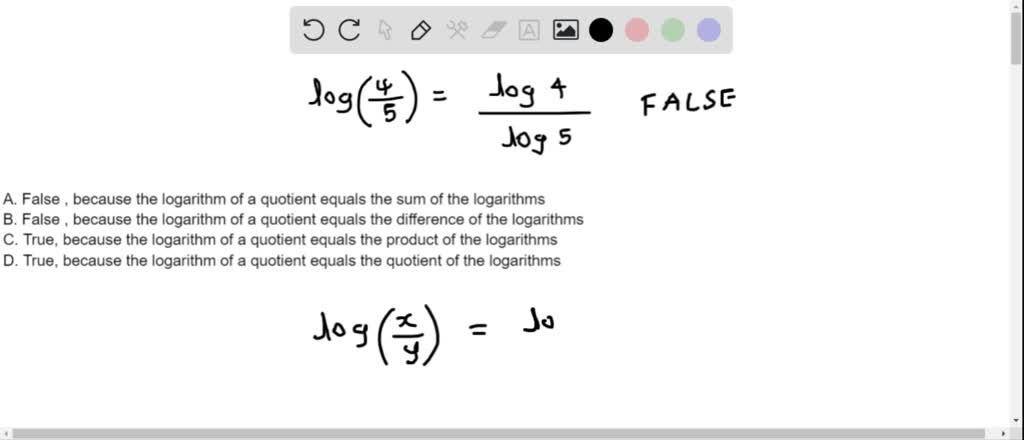

SOLVED: 1. True or false: The sum of 2 logarithms is equal to the quotient of the first log divided by the second log. 2. True or false: A function written by

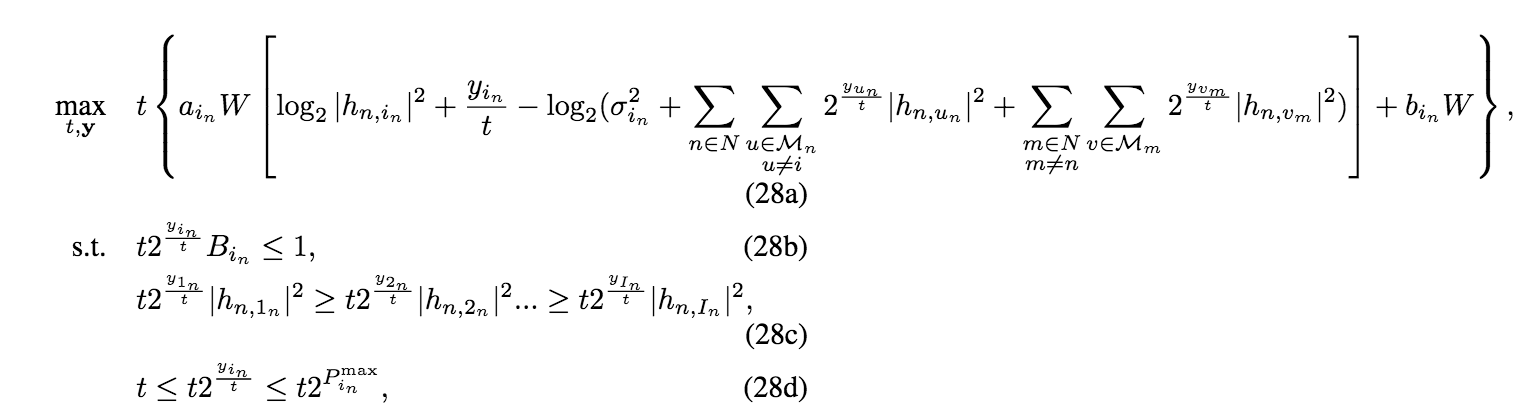

Convert the perspective function of log-sum-exp to cvx - CVX Forum: a community-driven support forum

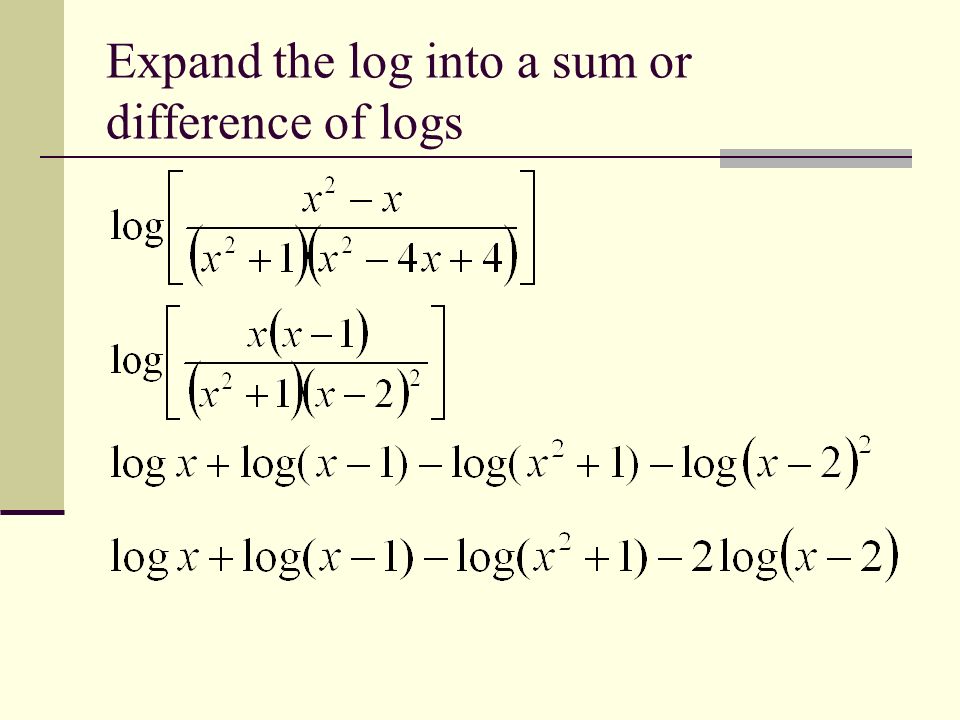

Section 6.5 – Properties of Logarithms. Write the following expressions as the sum or difference or both of logarithms. - ppt download

Underflow/overflow from improper log, then sum, then exp · Issue #5 · lanl-ansi/inverse_ising · GitHub

![Accurate Computation of the Log-Sum-Exp and Softmax Functions (PDF) | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e9707d0614bc50ede075aca987e65eb06e86cda5/14-Figure5.1-1.png)